Introduction

NOTE: An updated article is available here.

This is a series of 4 articles that aims to give a general guideline on how to deploy the Open Source Suricata IDPS on high-speed networks (10Gbps) in IDS mode using AF_PACKET, PF_RING or DNA.

Suricata is a high performance Network IDS, IPS and Network Security Monitoring engine. It is Open Source and owned by a community-run non-profit foundation, the Open Information Security Foundation (OISF). Suricata is developed by the OISF and its supporting vendors.

In addition, we will make use of some of the great Suricata features: mainly, we will compile it with GeoIP and file extraction support (extract files on the fly from traffic, based on their file type / file extension / file size / file name / MD5 hash).

Furthermore, Logstash / Kibana / Elasticsearch configuration and set up will be explored.

This series consists of the following chapters:

Chapter I - Preparation

This chapter includes a general system description and basic set up tasks execution and tuning.

Chapter II - PF_RING / DNA

Part One - PF_RING

Part Two - DNA

This chapter includes two sections - PF_RING and DNA set up and configuration tasks.

Chapter III - AF_PACKET

This chapter includes AF_PACKET set up and configuration tasks.

Chapter IV - Logstash / Kibana / Elasticsearch

This chapter includes Logstash/Kibana/Elasticsearch set up and configuration tweaks - making use of the JSON log output available in Suricata.

Following these tutorials does not guarantee you 0 drops or a perfect set up. Every set up is unique, depending on a number of things including type of traffic, HW, rulesets used and much more.

Instead, this set of articles is intended as a general guide / reference, and you should further adjust settings after you have gone through the initial deployment steps and an analysis of your needs and traffic.

For this set of articles it is not mandatory to install Suricata with both AF_PACKET and PF_RING (or DNA) enabled. Whether you choose one, the other or both is entirely up to you. This article series does not aim to produce a performance comparison between AF_PACKET and PF_RING; again, it is up to you, depending on your needs, environment and hardware, to see which one works better for your setup.

Chapter I - Preparation

In Chapter I of this series we will get a quick overview and basic analysis of the OS at the system level and of the traffic we are about to monitor, and do a quick, basic Suricata installation. We will also do an initial set up and prep of the system and the network card.

System's HW

CPU: One Intel(R) Xeon(R) CPU E5-2680 0 @ 2.70GHz (16 cores counting Hyperthreading)

root@suricata:/# cat /proc/cpuinfo

processor : 0

vendor_id : GenuineIntel

cpu family : 6

model : 45

model name : Intel(R) Xeon(R) CPU E5-2680 0 @ 2.70GHz

stepping : 7

microcode : 0x70b

cpu MHz : 2701.000

cache size : 20480 KB

physical id : 0

siblings : 16

Memory: 64GB - 1600 MHz

root@suricata:~# cat /proc/meminfo

MemTotal: 65951532 kB

MemFree: 22508716 kB

Buffers: 1028 kB

Cached: 2251136 kB

SwapCached: 0 kB

Network Card: Intel 82599EB 10-Gigabit SFI/SFP+

04:00.0 Ethernet controller: Intel Corporation 82599EB 10-Gigabit SFI/SFP+ Network Connection (rev 01)

Subsystem: Intel Corporation Ethernet Server Adapter X520-2

Control: I/O+ Mem+ BusMaster+ SpecCycle- MemWINV- VGASnoop- ParErr- Stepping- SERR- FastB2B- DisINTx+

Status: Cap+ 66MHz- UDF- FastB2B- ParErr- DEVSEL=fast >TAbort- <TAbort- <MAbort- >SERR- <PERR- INTx-

Latency: 0, Cache Line Size: 64 bytes

Interrupt: pin A routed to IRQ 34

Region 0: Memory at fbc20000 (64-bit, non-prefetchable) [size=128K]

Region 2: I/O ports at e020 [size=32]

Region 4: Memory at fbc44000 (64-bit, non-prefetchable) [size=16K]

Capabilities: [40] Power Management version 3

System's OS

64 bit Ubuntu LTS 12.04.3

root@suricata:/# uname -a

Linux suricata 3.2.0-39-generic #62-Ubuntu SMP Thu Feb 28 00:28:53 UTC 2013 x86_64 x86_64 x86_64 GNU/Linux

Suricata

We will use the current dev version of Suricata at the time of this writing: 2.0dev (rev 92568c3).

Network Traffic

No two networks carry the same traffic.

Make sure you carefully analyze and select your HW (CPU, network cards, RAM, HDD, PCI bus type and speed) and your deployment needs (which rules and what type of rule set you are going to use).

Make sure you have an idea of how you are going to mirror the traffic. A good article on the subject of using a Network Tap or Port Mirror can be found HERE.

Make sure you know and analyze/investigate/profile the kind of traffic/protocols, network and users/organization for which you will be doing the deployment.

It is important to point out that this is a set up for IDS monitoring of 10Gbps of ISP (Internet Service Provider) network backbone traffic.

Some of the tools you could use to get an idea of the traffic, and which are also needed for the configuration part:

apt-get install ethtool bwm-ng iptraf

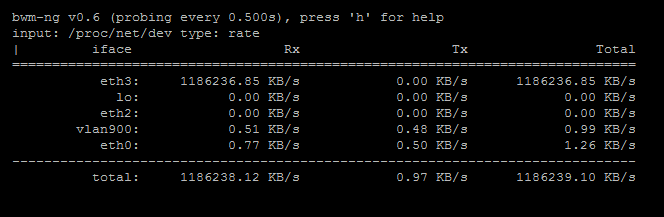

Type bwm-ng and hit Enter:

Then press d:

tcpstat -i eth3 -o "Time:%S\tn=%n\tavg=%a\tstddev=%d\tbps=%b\n" 1

(substitute eth3 with your interface)

From the man pages of tcpstat, the options and format fields above mean:

-o - output format

1 - poll every 1 second

n - %n, the number of packets

avg - %a, the average packet size in bytes

stddev - %d, the standard deviation of the size of each packet in bytes

bps - %b, the number of bits per second

On this set up that is about 1.5 Mpps (million packets per second).
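To read the tcpstat numbers at a glance, the per-interval packet count and the bits-per-second figure can be converted into Mpps and Gbps. The following is only a minimal sketch: the function name tcpstat_summary is made up for illustration, and it assumes the output format string used in the tcpstat command above.

```shell
#!/bin/sh
# Summarise tcpstat lines of the form (fields separated by tabs):
#   Time:<epoch>  n=<packets>  avg=<bytes>  stddev=<bytes>  bps=<bits per second>
# Lines are read from stdin; the first argument is the polling interval
# in seconds (default 1). tcpstat_summary is a hypothetical helper name.
tcpstat_summary() {
    interval="${1:-1}"
    awk -v interval="$interval" '
        {
            n = ""; bps = ""
            for (i = 1; i <= NF; i++) {
                if ($i ~ /^n=/)   { n = substr($i, 3) }
                if ($i ~ /^bps=/) { bps = substr($i, 5) }
            }
            # Packets per interval -> million packets per second,
            # bits per second -> gigabits per second.
            if (n != "" && bps != "")
                printf "%.2f Mpps  %.2f Gbps\n", n / interval / 1e6, bps / 1e9
        }'
}
```

It could be used by piping the tcpstat command shown earlier into tcpstat_summary 1, with the argument matching the polling interval.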

iptraf - you could have a look around:

"statistical breakdowns" and "detailed interface statistics" -> TCP/UDP port, packet size, then sort

NOTICE: None of the above 3 tools will work with a DNA mode configuration and installation while Suricata is running on the same interface (explained in a later chapter).

Packages installation

General packages needed:

apt-get -y install libpcre3 libpcre3-dbg libpcre3-dev \
build-essential autoconf automake libtool libpcap-dev libnet1-dev \
libyaml-0-2 libyaml-dev zlib1g zlib1g-dev libcap-ng-dev libcap-ng0 \
make flex bison git git-core subversion libmagic-dev libnuma-dev

For Eve (all JSON output):

apt-get install libjansson-dev libjansson4

For MD5 support (file extraction):

apt-get install libnss3-dev libnspr4-dev

For GeoIP:

apt-get install libgeoip1 libgeoip-dev

Network and system tools:

apt-get install ethtool bwm-ng iptraf htop

Installation and configuration

Suricata

Get the latest Suricata dev branch:

git clone git://phalanx.openinfosecfoundation.org/oisf.git && cd oisf/ && git clone https://github.com/ironbee/libhtp.git -b 0.5.x

Compile and install:

./autogen.sh && ./configure --enable-geoip \
--with-libnss-libraries=/usr/lib \
--with-libnss-includes=/usr/include/nss/ \
--with-libnspr-libraries=/usr/lib \
--with-libnspr-includes=/usr/include/nspr \
&& sudo make clean && sudo make && sudo make install && sudo ldconfig

NOTICE: If this is your first time installing Suricata, make sure you do some basic setup tasks (rule downloads, directory set up, network range configuration) as described here (or you can just use && sudo make install-full instead of && sudo make install above).

We will do a specific set up later in the articles, but you do need to have the basic set up done first.

To verify that everything is in place, you can execute the following commands:

which suricata

suricata --build-info

ldd `which suricata`
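The build-info output can also be checked from a script instead of by eye. Below is a minimal sketch: has_feature is a hypothetical helper, and the "<name> support: yes" wording it looks for is an assumption about how your Suricata build prints its feature list, so compare it against your own output first.

```shell
#!/bin/sh
# Succeeds (exit status 0) if the build-info text on stdin contains a line
# of the form "<feature> support: yes".
# has_feature is a hypothetical helper; the label wording is an assumption.
has_feature() {
    grep -Eq "^[[:space:]]*$1 support:[[:space:]]*yes"
}
```

For example, suricata --build-info | has_feature GeoIP && echo "GeoIP compiled in" would report whether GeoIP support made it into the build; the same pattern could be tried with libnss or libjansson.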

Network card drivers and tuning

Our card is Intel 82599EB 10-Gigabit SFI/SFP+

rmmod ixgbe

sudo modprobe ixgbe FdirPballoc=3

ifconfig eth3 up

Then we disable irqbalance and make sure it does not enable itself during reboot:

killall irqbalance

service irqbalance stop

apt-get install chkconfig

chkconfig irqbalance off

Get the Intel network driver from here (we will use it in a second) - https://downloadcenter.intel.com/default.aspx

Download to your directory of choice, then unzip, compile and install:

tar -zxf ixgbe-3.18.7.tar.gz

cd /home/pevman/ixgbe-3.18.7/src

make clean && make && make install

Set irq affinity - do not forget to replace eth3 below with the name of the network interface you are using:

cd ../scripts/

./set_irq_affinity eth3

You should see something like this:

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ./set_irq_affinity eth3

no rx vectors found on eth3

no tx vectors found on eth3

eth3 mask=1 for /proc/irq/101/smp_affinity

eth3 mask=2 for /proc/irq/102/smp_affinity

eth3 mask=4 for /proc/irq/103/smp_affinity

eth3 mask=8 for /proc/irq/104/smp_affinity

eth3 mask=10 for /proc/irq/105/smp_affinity

eth3 mask=20 for /proc/irq/106/smp_affinity

eth3 mask=40 for /proc/irq/107/smp_affinity

eth3 mask=80 for /proc/irq/108/smp_affinity

eth3 mask=100 for /proc/irq/109/smp_affinity

eth3 mask=200 for /proc/irq/110/smp_affinity

eth3 mask=400 for /proc/irq/111/smp_affinity

eth3 mask=800 for /proc/irq/112/smp_affinity

eth3 mask=1000 for /proc/irq/113/smp_affinity

eth3 mask=2000 for /proc/irq/114/smp_affinity

eth3 mask=4000 for /proc/irq/115/smp_affinity

eth3 mask=8000 for /proc/irq/116/smp_affinity

root@suricata:/home/pevman/ixgbe-3.18.7/scripts#

Now we have the latest drivers installed (at the time of this writing) and we have run the affinity script:

*-network:1

description: Ethernet interface

product: 82599EB 10-Gigabit SFI/SFP+ Network Connection

vendor: Intel Corporation

physical id: 0.1

bus info: pci@0000:04:00.1

logical name: eth3

version: 01

serial: 00:e0:ed:19:e3:e1

width: 64 bits

clock: 33MHz

capabilities: pm msi msix pciexpress vpd bus_master cap_list ethernet physical fibre

configuration: autonegotiation=off broadcast=yes driver=ixgbe driverversion=3.18.7 duplex=full firmware=0x800000cb latency=0 link=yes multicast=yes port=fibre promiscuous=yes

resources: irq:37 memory:fbc00000-fbc1ffff ioport:e000(size=32) memory:fbc40000-fbc43fff memory:fa700000-fa7fffff memory:fa600000-fa6fffff
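The mask values printed by set_irq_affinity earlier are hexadecimal CPU bitmasks: each IRQ is pinned to one CPU, and CPU n corresponds to bit n of the mask. A minimal sketch of that mapping follows; cpu_mask is a hypothetical helper written for illustration, not part of the Intel script.

```shell
#!/bin/sh
# Print the smp_affinity mask (hexadecimal, no 0x prefix) that selects a
# single CPU. CPU numbering starts at 0, so CPU n maps to bit n.
# cpu_mask is a hypothetical helper name used only for this sketch.
cpu_mask() {
    printf '%x\n' "$((1 << $1))"
}
```

With this, cpu_mask 4 gives 10 and cpu_mask 15 gives 8000, which lines up with the masks listed for IRQ 105 and IRQ 116 in the script output. The value currently in effect for an IRQ can be read back with cat /proc/irq/101/smp_affinity (substituting your own IRQ number).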

We need to disable all offloading on the network card so that the IDS sees the traffic as it actually appears on the wire (without checksum offloading, tcp-segmentation-offloading and the like). Otherwise your IDPS will not see the "natural" network traffic and will not inspect it properly.

This influences the correctness of ALL outputs, including file extraction. So make sure all offloading features are OFF!

When you first install the drivers and card your offloading settings might look like this:

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -k eth3

Offload parameters for eth3:

rx-checksumming: on

tx-checksumming: on

scatter-gather: on

tcp-segmentation-offload: on

udp-fragmentation-offload: off

generic-segmentation-offload: on

generic-receive-offload: on

large-receive-offload: on

rx-vlan-offload: on

tx-vlan-offload: on

ntuple-filters: off

receive-hashing: on

root@suricata:/home/pevman/ixgbe-3.18.7/scripts#

So we disable all of them, like so (and we load balance the UDP flows for that particular network card):

ethtool -K eth3 tso off

ethtool -K eth3 ufo off

ethtool -K eth3 gro off

ethtool -K eth3 lro off

ethtool -K eth3 gso off

ethtool -K eth3 rx off

ethtool -K eth3 tx off

ethtool -K eth3 sg off

ethtool -K eth3 rxvlan off

ethtool -K eth3 txvlan off

ethtool -N eth3 rx-flow-hash udp4 sdfn

ethtool -N eth3 rx-flow-hash udp6 sdfn

ethtool -n eth3 rx-flow-hash udp6

ethtool -n eth3 rx-flow-hash udp4

ethtool -C eth3 rx-usecs 1

ethtool -C eth3 adaptive-rx off
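Since the interface name has to be substituted in every one of the offload commands above, it may be convenient to generate them from a single list. This is only a sketch: offload_off_cmds is a hypothetical helper that prints the ethtool -K commands for a given interface rather than running them, and it covers the offload switches only, not the rx-flow-hash or coalescing settings.

```shell
#!/bin/sh
# Print (do not run) the ethtool commands that switch off each offload
# feature from the list above for the given interface.
# offload_off_cmds is a hypothetical helper name used for this sketch.
offload_off_cmds() {
    iface="$1"
    for feature in tso ufo gro lro gso rx tx sg rxvlan txvlan; do
        echo "ethtool -K $iface $feature off"
    done
}
```

Running offload_off_cmds eth3 shows the commands for review; piping the output into sh as root would apply them.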

Your output should look something like this:

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -K eth3 tso off

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -K eth3 gro off

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -K eth3 lro off

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -K eth3 gso off

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -K eth3 rx off

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -K eth3 tx off

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -K eth3 sg off

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -K eth3 rxvlan off

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -K eth3 txvlan off

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -N eth3 rx-flow-hash udp4 sdfn

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -N eth3 rx-flow-hash udp6 sdfn

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -n eth3 rx-flow-hash udp6

UDP over IPV6 flows use these fields for computing Hash flow key:

IP SA

IP DA

L4 bytes 0 & 1 [TCP/UDP src port]

L4 bytes 2 & 3 [TCP/UDP dst port]

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -n eth3 rx-flow-hash udp4

UDP over IPV4 flows use these fields for computing Hash flow key:

IP SA

IP DA

L4 bytes 0 & 1 [TCP/UDP src port]

L4 bytes 2 & 3 [TCP/UDP dst port]

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -C eth3 rx-usecs 1000

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -C eth3 adaptive-rx off
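The sdfn argument passed to rx-flow-hash is a string of one-letter field selectors, and the ethtool -n output above shows what each of the four letters selects. The sketch below spells that mapping out; describe_flow_hash is a hypothetical helper, and it only covers the four letters used in this article (the ethtool man page lists more).

```shell
#!/bin/sh
# Expand an ethtool rx-flow-hash field string (for example "sdfn") into one
# description per letter, following the ethtool man page.
# describe_flow_hash is a hypothetical helper name used for this sketch.
describe_flow_hash() {
    fields="$1"
    while [ -n "$fields" ]; do
        rest="${fields#?}"            # everything after the first letter
        letter="${fields%"$rest"}"    # the first letter itself
        case "$letter" in
            s) echo "s: IP source address" ;;
            d) echo "d: IP destination address" ;;
            f) echo "f: L4 bytes 0 and 1 (TCP/UDP source port)" ;;
            n) echo "n: L4 bytes 2 and 3 (TCP/UDP destination port)" ;;
            *) echo "$letter: not covered by this sketch" ;;
        esac
        fields="$rest"
    done
}
```

Calling describe_flow_hash sdfn prints one line per selector, so hashing on all four fields spreads UDP flows across the receive queues by address and port pairs.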

Now we double-check by running ethtool again to verify that the offloading is OFF:

root@suricata:/home/pevman/ixgbe-3.18.7/scripts# ethtool -k eth3

Offload parameters for eth3:

rx-checksumming: off

tx-checksumming: off

scatter-gather: off

tcp-segmentation-offload: off

udp-fragmentation-offload: off

generic-segmentation-offload: off

generic-receive-offload: off

large-receive-offload: off

rx-vlan-offload: off

tx-vlan-offload: off
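The same check can be scripted so that a feature left on is not missed. A minimal sketch, assuming the "name: on/off" layout of the ethtool -k output shown above; offloads_still_on is a hypothetical helper, and it deliberately ignores non-offload lines such as receive-hashing.

```shell
#!/bin/sh
# Read "ethtool -k" output on stdin and print every offload-related feature
# that is still reported as "on". The exit status is 0 only when none are
# left on. offloads_still_on is a hypothetical helper name for this sketch.
offloads_still_on() {
    awk -F': *' '
        /offload|checksumming|scatter-gather/ && $2 ~ /^on/ {
            name = $1
            sub(/^[ \t]+/, "", name)   # drop indentation used by newer ethtool
            print name
            found = 1
        }
        END { exit found ? 1 : 0 }'
}
```

For example, ethtool -k eth3 | offloads_still_on && echo "all offloading is off" prints the confirmation only when nothing is listed.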

So in general we are done with the preparation of the system. The next chapter will explain PF_RING / DNA specific configuration in the suricata.yaml and the system in general.
